Open Policy Analysis

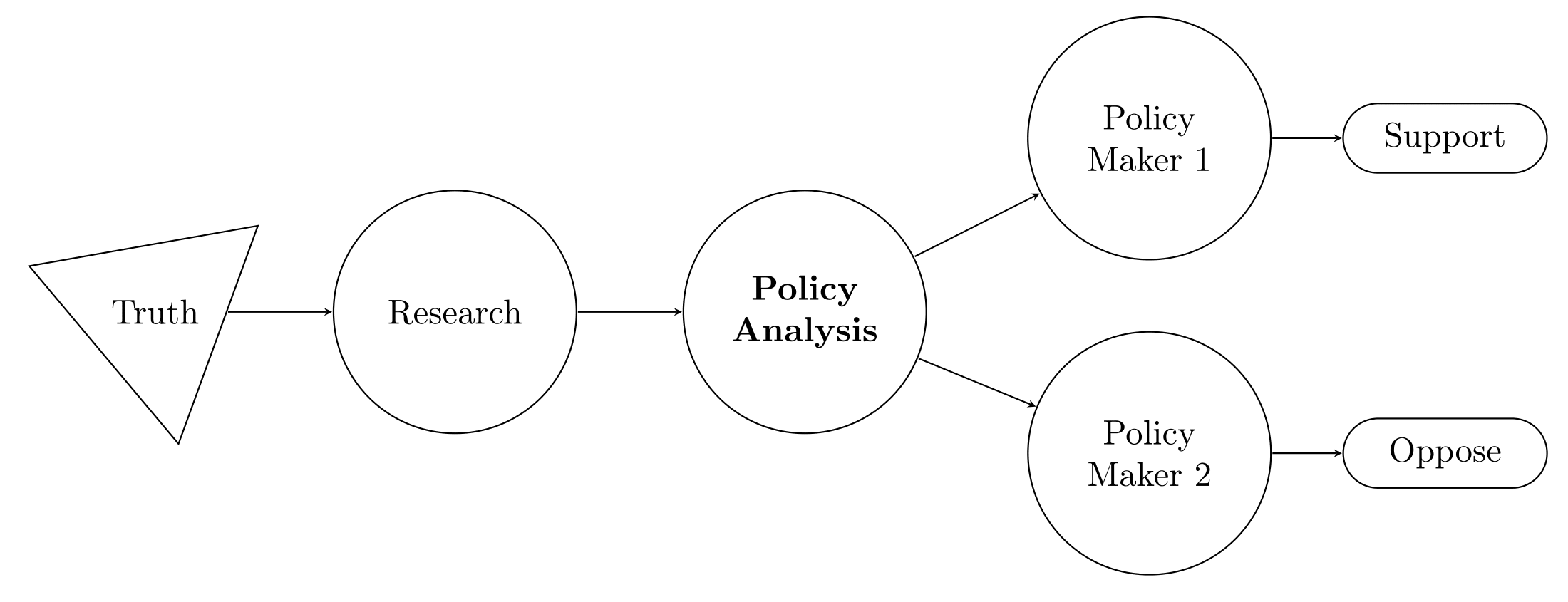

In the last two decades, governments and researchers have placed growing emphasis on evidence-based policy. However, while the research evidence generated to inform policy has become more rigorous and transparent, policy analysis (the process of contextualizing evidence to inform specific policy decisions, depicted in Figure 1) remains opaque. The Berkeley Initiative for Transparency in the Social Sciences (BITSS) recognizes that there is significant room for improvement in how evidence is used in policy reports, as well as potential efficiency gains from greater reproducibility and automation.

BITSS supports Open Policy Analysis (OPA), an approach to policy analysis wherein data, code, materials, and clear accounts of methodological decisions are made freely available to facilitate collaboration, discussion, and reuse. OPA adapts and applies cutting-edge tools, methods, and practices commonly used for transparency and reproducibility in scientific research.

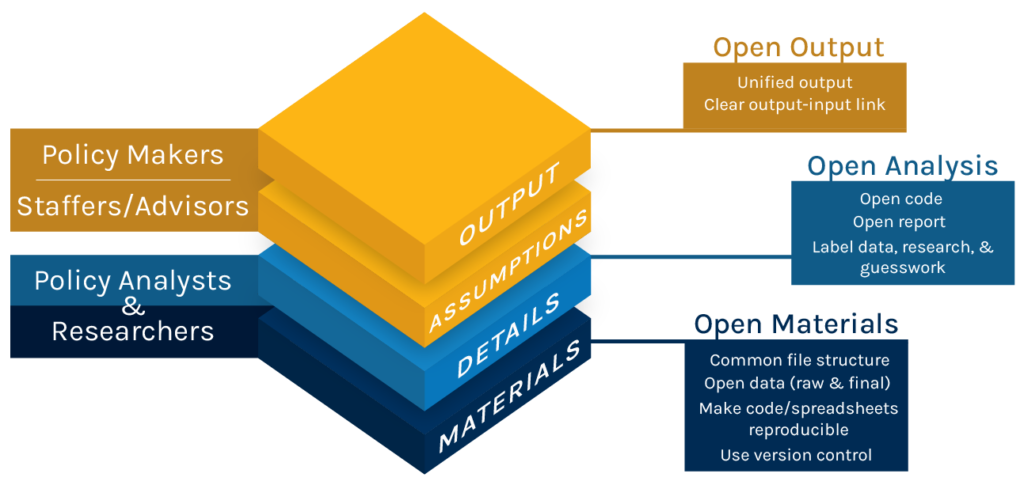

OPA is based on the following principles:

- Open output: The analysis should clearly pre-specify the output that will inform policymakers and identify the preferred set of estimates. This principle also entails properly communicating the underlying uncertainty and how the results vary with the underlying assumptions.

- Open analysis: All elements of the analysis should be easily accessible and readable for critical appraisal and improvement. This includes disclosing all methodological procedures and underlying assumptions behind the report.

- Open materials: All materials (raw data, code, and supporting documents) should be made publicly available to allow a policy report to be reproduced in its entirety with minimal effort.

Figure 2 shows how OPA principles can be implemented in practice: the relevant audience for each output (on the left), the corresponding output (in the middle), and the proposed transparency tools and practices for implementing them (on the right). To see what OPA looks like in action, have a look at our Projects page.

In heavily contested debates, such as those over the effects of minimum wage policies, OPA can bring clarity to how stakeholders on both sides of the debate use evidence. This makes it possible for consumers of policy analysis to understand and critically assess the merits of different policy alternatives.

BITSS develops and tests tools, practices, and community standards for OPA, and supports policy analysis organizations in operationalizing OPA. If you are a policy analyst or conduct policy-relevant research, you can get involved in three ways:

- Become a signatory of the OPA Guidelines by adding your name (or the name of your organization, if you sign as an organizational representative) to this form.

- Submit your feedback on the OPA Guidelines, or become a collaborator on them.

- Implement OPA at your organization or apply it to a specific policy report, and include a statement that the report was developed in accordance with the OPA Guidelines (e.g., “This analysis complies with level 3 of the Open Policy Analysis (OPA) Guidelines developed by BITSS”).

If you would like to collaborate with BITSS to develop a demonstration of OPA in your organization or a particular policy report, please contact the BITSS Program Manager.

Find more OPA promotional resources here.